We never got the thumbs-down “dislike” reaction on Facebook. Maybe that is because it would inevitably have been used for cyberbullying, or maybe a mere dislike wasn’t considered engaging enough. Instead, we got five new reactions to express our feelings.

On the flip side, content creators now have an array of new tools at their disposal for engaging readers and achieving viral spread. When something is F-ed up, we no longer have to instruct people to like or share a post to show sympathy. The new reactions have got us covered.

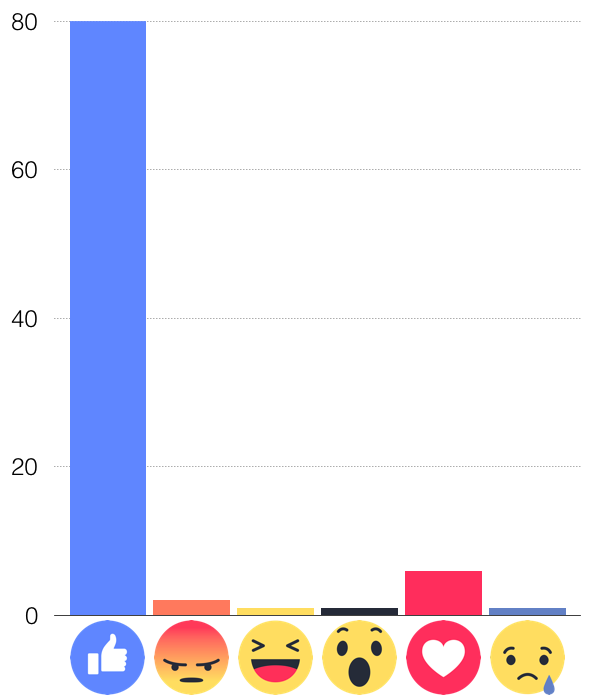

After a year of live testing, Facebook released usage statistics for the new reactions.

Facebook was apparently happy with these results and decided to boost the impact of the new reactions even further in the news feed algorithm that decides which content we get to see. We can now achieve viral spread with engaging content that incites love, happiness, astonishment, sadness and anger – or is it hate?

I don’t know what types of content Facebook intended to promote when they designed the angry face reaction and decided to boost that reaction’s impact in the news feed algorithm.

I do, however, know what kind of content they actually got. Since February 2017 I have been involved in monitoring hate speech on social media through the Swedish non-profit watchdog organization Näthatsgranskaren (The Online Hate Crime Monitor).

The organization uses proprietary software to scan open Swedish Facebook groups and flag messages containing illegal hate speech. Since February 2017 the group has identified more than 2,000 instances of illegal hate speech on Swedish-language Facebook. Näthatsgranskaren has so far reported more than 1,500 hate speech comments to Swedish law enforcement, thereby increasing the total number of legal complaints about social media hate speech in Sweden by an order of magnitude.

During this work we have inevitably gained some insight. One such insight is that social media hate speech does not arise out of nothing. Rather, it is often summoned on purpose – or at least accepted as a foreseeable side effect – by hateful or callous site admins and moderators. The original posters of such Facebook threads typically strive for viral spread, whether to push a political agenda, for monetary gain or out of a need for approval. Their posts typically seem designed to incite hatred towards a minority group, be it an ethnic minority, a religious group or a sexual orientation. The wording is often vague, likely to avoid hate speech charges or removal by Facebook for violating its community guidelines.

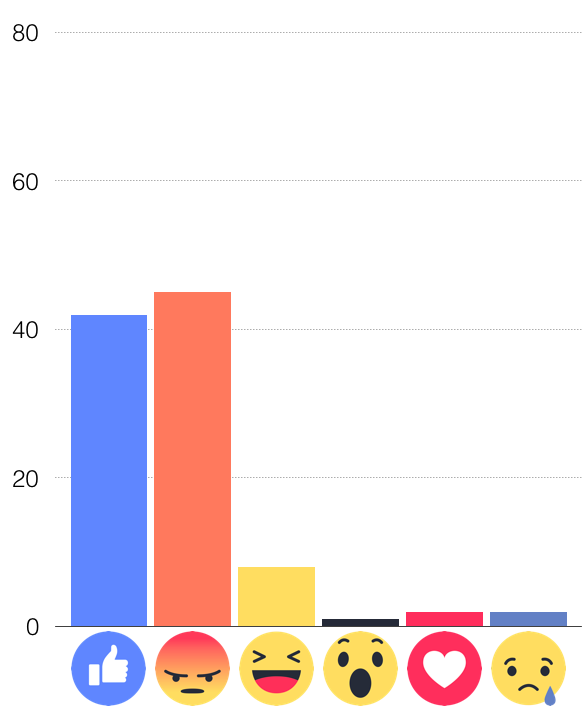

To get a sense of how well the hate mongers have actually managed to game the news feed algorithm, I decided to look at the distribution of reaction types on the posts where we had found hate speech comments.

I expected the distribution to show more ANGRY and fewer LOVE reactions than the Facebook average.

The result is clear: the number of ANGRY reactions even surpasses the number of traditional LIKEs! Since the new reactions are given higher priority than LIKEs in the algorithm, I see this as an indication that the posters have very successfully leveraged this new tool.
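For readers who want to repeat this kind of check, here is a minimal sketch of pooling reaction counts across a set of posts and computing each reaction type's share. It assumes the per-post counts have already been collected as dictionaries keyed by reaction type (LIKE plus the five new reactions); the function name `reaction_shares` and the example counts are hypothetical and are not Näthatsgranskaren’s software or data.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping

# LIKE plus the five reactions Facebook introduced in 2016.
REACTION_TYPES = ("LIKE", "LOVE", "HAHA", "WOW", "SAD", "ANGRY")


def reaction_shares(posts: Iterable[Mapping[str, int]]) -> Dict[str, float]:
    """Pool reaction counts across posts and return each type's share of the total."""
    totals = Counter()
    for counts in posts:
        totals.update(counts)  # adds this post's counts to the running totals
    grand_total = sum(totals[kind] for kind in REACTION_TYPES)
    if grand_total == 0:
        return {kind: 0.0 for kind in REACTION_TYPES}
    return {kind: totals[kind] / grand_total for kind in REACTION_TYPES}


# Hypothetical counts for illustration only; not Näthatsgranskaren's data.
example_posts = [
    {"LIKE": 120, "ANGRY": 150, "SAD": 10},
    {"LIKE": 80, "ANGRY": 95, "HAHA": 30, "LOVE": 5},
]
shares = reaction_shares(example_posts)
print(f"ANGRY {shares['ANGRY']:.0%} vs LIKE {shares['LIKE']:.0%}")  # ANGRY 50% vs LIKE 41%
```

The shares on their own say little; the finding described above comes from comparing them with Facebook’s published averages, which are not reproduced here.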

Considering the context, it is not surprising that Facebook posts that incite ANGRY reactions also provoke hate speech comments. What is more controversial is that the Facebook news feed algorithm, by weighting ANGRY reactions higher than ordinary LIKEs, actually prioritizes this type of hateful content above more balanced posts. Unwittingly, Facebook thereby likely drives both the quantity and the spread of illegal hate speech comments.

The above graph is based on a scan of reactions to 178 Facebook posts on which we had found a total of 232 hate speech comments. The majority of these posts are from Q2 2017.

The reviewed posts were published on public pages or groups that typically have a high frequency of illegal hate speech content. Of the 178 posts, seven have since been removed. Of the remaining 171 posts, 114 explicitly mention minority groups, 25 mention immigration or refugee policy, 18 are openly critical of named politicians or political parties, and 17 cannot be properly classified because they link to content that has since been removed.

Scanning the posts’ reactions revealed a total of 66,757 reactions from 34,764 individuals. This quantity indicates a significant reach for those posts, given that the number of people actually exposed to a post in an open group is generally an order of magnitude larger than the number of people who actively react to it. We can assume that hundreds of thousands, or even millions, of Swedes were exposed to these posts. Assuming a ratio between reactions and reach similar to that seen on the Näthatsgranskaren page, the 171 posts would have been seen by 1–3 million individuals. Those 171 posts constitute only about 10% of the posts that Näthatsgranskaren has flagged, which in turn are likely only a fraction of all hateful posts on the Swedish-speaking part of Facebook.
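The reaction-to-reach ratio observed on the Näthatsgranskaren page is not stated here, but the arithmetic can be checked in reverse using only the figures above. The sketch below shows that the “order of magnitude” rule alone already implies a reach in the hundreds of thousands, and what multiplier per reacting individual the 1–3 million estimate corresponds to. Treating reach as a simple multiple of reacting individuals is an assumption, and the helper names are illustrative.

```python
# Figures stated in the text above.
REACTIONS = 66_757
REACTING_INDIVIDUALS = 34_764


def estimated_reach(reactors: int, reach_per_reactor: float) -> float:
    """Back-of-envelope reach, assuming reach is a simple multiple of reacting individuals."""
    return reactors * reach_per_reactor


def implied_multiplier(reactors: int, reach: float) -> float:
    """Invert the estimate: viewers per reacting individual implied by a given reach."""
    return reach / reactors


print(f"Reactions per individual: {REACTIONS / REACTING_INDIVIDUALS:.2f}")  # 1.92
# "An order of magnitude larger" means a multiplier of at least 10.
print(f"Reach with a 10x multiplier: {estimated_reach(REACTING_INDIVIDUALS, 10):,.0f}")  # 347,640
for reach in (1_000_000, 3_000_000):
    print(f"{reach:,} viewers implies ~{implied_multiplier(REACTING_INDIVIDUALS, reach):.0f}x")
```

The resulting range of roughly 29 to 86 viewers per reacting individual is a derived figure, not a measured one.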

The upcoming Swedish general election in September 2018 will be preceded by intense campaigning. The campaign work will include grassroots campaigns on social media, and the parties and lobby groups will use every tool at their disposal to gain the upper hand. It is reasonable to anticipate that this will lead to increased leveraging of the Facebook reaction tools, including the ill-fated ANGRY reaction.

Hate campaigns threaten the democratic rights and the safety of the minority groups they target, and this should be enough for the social media platform to take action. Facebook could easily alleviate the problem by deprioritizing the ANGRY reaction in the news feed algorithm. The ANGRY reaction could still be offered as an option to Facebook users, but inciting hatred would no longer be an efficient way to achieve viral reach.
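To illustrate the proposal, here is a toy ranking model in which a post’s score is a weighted sum of its reactions. Facebook’s actual ranking signals and weights are not public; the weights, the `engagement_score` and `with_angry_weight` helpers and both posts are hypothetical. The point is only that lowering the ANGRY weight changes which post wins, without removing the reaction itself.

```python
from typing import Dict, Mapping

# Hypothetical weights for illustration only. Facebook's real ranking is not public
# and is far more complex than a weighted sum of reactions.
BOOSTED_WEIGHTS: Dict[str, float] = {
    "LIKE": 1.0, "LOVE": 2.0, "HAHA": 2.0, "WOW": 2.0, "SAD": 2.0, "ANGRY": 2.0,
}


def with_angry_weight(weights: Mapping[str, float], angry_weight: float) -> Dict[str, float]:
    """Return a copy of the weights with only the ANGRY weight changed."""
    return {**weights, "ANGRY": angry_weight}


def engagement_score(counts: Mapping[str, int], weights: Mapping[str, float]) -> float:
    """Weighted sum of reaction counts; reaction types without a weight count as zero."""
    return sum(weights.get(kind, 0.0) * n for kind, n in counts.items())


# Two hypothetical posts with the same total number of reactions (400).
hateful = {"LIKE": 100, "ANGRY": 300}
balanced = {"LIKE": 250, "LOVE": 150}

# The proposal: keep ANGRY available, but weight it below an ordinary LIKE.
deprioritized = with_angry_weight(BOOSTED_WEIGHTS, 0.5)

for label, weights in (("boosted", BOOSTED_WEIGHTS), ("ANGRY deprioritized", deprioritized)):
    posts = {"hateful": hateful, "balanced": balanced}
    ranking = sorted(posts, key=lambda name: engagement_score(posts[name], weights), reverse=True)
    print(f"{label}: {ranking}")
```

With these made-up numbers the hateful post outranks the balanced one (700 vs 550) while ANGRY is boosted, and drops below it (250 vs 550) once ANGRY is weighted at half a LIKE.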

Others would also benefit from a less hateful environment on Facebook. The premium brands that finance Facebook’s operations through advertising likely do not want their ads to be seen in a context saturated with illegal hate speech, be it racism, sexism, Islamophobia or homophobia. Hopefully this also points to a solution. If Facebook realizes that the additional advertising inventory it creates by boosting hateful posts is damaging rather than helpful to its paying clients, it could see this as a threat to its own business. If that happens, it will likely adjust its algorithm accordingly, protecting the democratic rights of minorities as a side effect.